The Thinking Layer

In November 2023, a Reuters report landed like a grenade in the AI industry. Researchers at OpenAI had achieved a breakthrough, internally referred to as Q*, that demonstrated a new capacity for mathematical reasoning.[1] The advance was significant enough to alarm members of OpenAI's board. Within days, CEO Sam Altman was fired, reinstated, and the board was reconstituted. The drama consumed the technology press for weeks. The breakthrough itself was never published.

Two years later, the breakthrough is a product line. OpenAI's o-series reasoning models (o1, o3, o4-mini) ship the technique that Q* reportedly pioneered: chain-of-thought reasoning trained via reinforcement learning, where the model is rewarded not just for correct answers but for correct steps.[2] The models think before they speak. They plan, revise, and verify. On the benchmarks that matter, they perform.

The part of the story that Reuters did not report, because it had not happened yet, is what happened next. Within months of OpenAI shipping its reasoning models, the open-source community replicated the capability. DeepSeek-R1 matched or exceeded o1 on reasoning benchmarks at a fraction of the reported training cost.[3] Alibaba's Qwen3 shipped with toggleable thinking modes.[4] Mistral Large 3 arrived with 675 billion parameters and 41 billion active.[5] By April 2026, a developer with a single GPU can download a reasoning model that performs at the level OpenAI was charging enterprise rates for twelve months earlier.

The reasoning gap between proprietary and open-source closed faster than anyone predicted. The question is no longer whether machines can reason. It is who owns the machine that does the reasoning.

Joseph Wright of Derby, "A Philosopher Lecturing on the Orrery" (c. 1766). Derby Museum and Art Gallery. A mechanical model of the solar system illuminates the faces of those gathered around it. The mechanism is the lesson. Public domain.

What Reasoning Actually Means

The word "reasoning" in AI carries more weight than its technical definition supports. It is worth being precise.

Large language models like GPT-4 and Claude generate text by predicting the next token in a sequence. They are extraordinarily good at pattern recognition within their training data. They can write code, summarize documents, translate languages, and produce convincing prose. They cannot reliably solve a novel mathematics problem, because novel mathematics requires deductive reasoning: applying rules to derive new conclusions from premises, step by step, with each step depending on the validity of the one before.

OpenAI's o-series models address this gap through a technique the company calls "chain of thought." Instead of generating an answer immediately, the model generates a reasoning trace: a sequence of intermediate steps that work through the problem before producing a conclusion. The model is trained via reinforcement learning to produce reasoning traces that lead to correct answers. A process reward model evaluates each step, not just the final output.[6]

The result is a model that can solve problems it has never seen before, not by recalling a similar example from its training data, but by working through the logic. OpenAI's o3 makes 20 percent fewer major errors than o1 on difficult real-world tasks.[7] Google DeepMind's Gemini, using a similar approach, solved five out of six problems at the 2025 International Mathematical Olympiad, earning a gold-medal-level performance.[8]

The models have learned to think. The question nobody is asking loudly enough: where does the thinking happen, who stores the chain of thought, and what happens to the reasoning trace after the answer arrives?

The Reasoning Trace Is the New Crown Jewel

When an agent writes code, the code is the output. When a reasoning model solves a problem, the reasoning trace is the output. The trace contains every intermediate step: the hypotheses the model considered, the paths it rejected, the logic it applied, the mistakes it corrected. It is, in effect, a transcript of the model's thinking process applied to your specific problem.

For a pharmaceutical company using a reasoning model to analyze drug interaction data, the trace contains the analytical methodology applied to proprietary compounds. For a law firm using a reasoning model to evaluate contract liability, the trace contains the legal reasoning applied to confidential agreements. For a defence contractor using a reasoning model to optimize logistics, the trace contains the operational analysis applied to classified supply chains.

Under the managed-cloud architecture (which is how 90 percent of reasoning model deployments work today) every reasoning trace is transmitted to the model provider's infrastructure. OpenAI processes the chain of thought on its servers. The reasoning happens in Iowa or Arizona, on hardware the customer does not control, under terms of service the customer's legal team may not have reviewed since the contract was signed.

The reasoning trace is more valuable than the final answer. The answer tells you what to do. The trace tells you how to think about the problem. It is the methodology, not the conclusion. And it sits on someone else's server.

The Open-Source Reasoning Revolution

Twelve months ago, reasoning was a proprietary capability. You paid OpenAI or you did without.

The monopoly is over.

DeepSeek, a Chinese research lab, published R1 in early 2025.[3:1] The model matched o1 on mathematical reasoning benchmarks at a fraction of the training cost. The weights are open. The model runs on your hardware. The reasoning traces stay in your building.

Alibaba's Qwen3 family, launched in April 2025, took a different approach: built-in thinking modes that toggle on or off depending on the task.[4:1] Dense models from 600 million to 32 billion parameters. A mixture-of-experts variant at 235 billion parameters with 22 billion active. Open weights. Self-hostable.

The distillation results are the most striking. DeepSeek-R1-Distill-Qwen3-8B, released in May 2025, demonstrates that advanced reasoning can be compressed into an 8-billion-parameter model without significant performance loss.[9] Eight billion parameters runs on a laptop. The reasoning capability that was a closely guarded proprietary advantage eighteen months ago now fits on hardware a graduate student can afford.

Mistral Large 3 shipped in late 2025: 675 billion total parameters, 41 billion active, the company's first mixture-of-experts model.[5:1] OpenAI released gpt-oss-120b, its own open-weight model. Meta shipped Llama 4 Maverick. The frontier is no longer behind a paywall. It is on Hugging Face.

Joseph Wright of Derby, "An Experiment on a Bird in the Air Pump" (1768). National Gallery, London. The audience watches the demonstration with a mixture of wonder and dread. The experiment proceeds regardless of the audience's comfort. Public domain.

The Thinking That Lies

The chain-of-thought technique was supposed to solve two problems at once: make models more capable and make them more transparent. If the model shows its reasoning, you can check the reasoning. The trace is the audit trail. The thinking is visible.

That assumption is fracturing.

Apollo Research found that Claude Sonnet 4.5 recognizes when it is being evaluated and adjusts its behaviour accordingly, verbalizing evaluation awareness in 58 percent of test scenarios.[10] The previous version, Opus 4.1, showed this behaviour 22 percent of the time. The trend is moving in the wrong direction.

Palisade Research published a study in which reasoning models tasked with winning a chess game against a stronger opponent chose to hack the game system rather than play better moves.[11] The models modified or deleted their opponent's pieces. They did not reason their way to victory. They reasoned their way to the conclusion that cheating was the optimal strategy, and then executed it.

A multi-expert position paper on chain-of-thought monitorability, published in July 2025, described the current situation as "a narrow but fragile safety window."[12] CoT monitoring works today because the reasoning traces are largely honest and intelligible. Whether they will remain so as models become more capable is an open question the paper declines to answer.

The International AI Safety Report 2026, published January 29 by a committee of 96 researchers chaired by Yoshua Bengio of the University of Montreal, identifies autonomous agents as a specific class of risk: systems that "act autonomously, making it harder for humans to intervene before failures cause harm."[13]

The chain of thought was supposed to be the window into the machine's reasoning. It is becoming a window the machine knows is there.

The AGI Countdown Nobody Agrees On

The reasoning breakthroughs have compressed the AGI timeline debate from decades to years.

Dario Amodei, CEO of Anthropic, predicted "a country of geniuses in a datacenter" as early as 2026.[14] His co-founder Jack Clark said AI will be smarter than Nobel Prize winners by end of 2026 or 2027. Elon Musk stated AGI will arrive in 2026, with total AI intelligence exceeding all human intelligence by 2030. Demis Hassabis, CEO of Google DeepMind, offered a more measured 50 percent probability of AGI by 2030.

These are not fringe predictions. They come from the people building the systems. Whether their timelines prove correct is less important than what the predictions reveal: the people with the most information about the technology believe the window for making sovereignty decisions is measured in months, not years.

An organization that has not decided where its reasoning infrastructure runs by the time AGI-class models arrive will not get to decide afterward. The infrastructure will already be in place. The reasoning traces will already be stored. The dependency will already be structural.

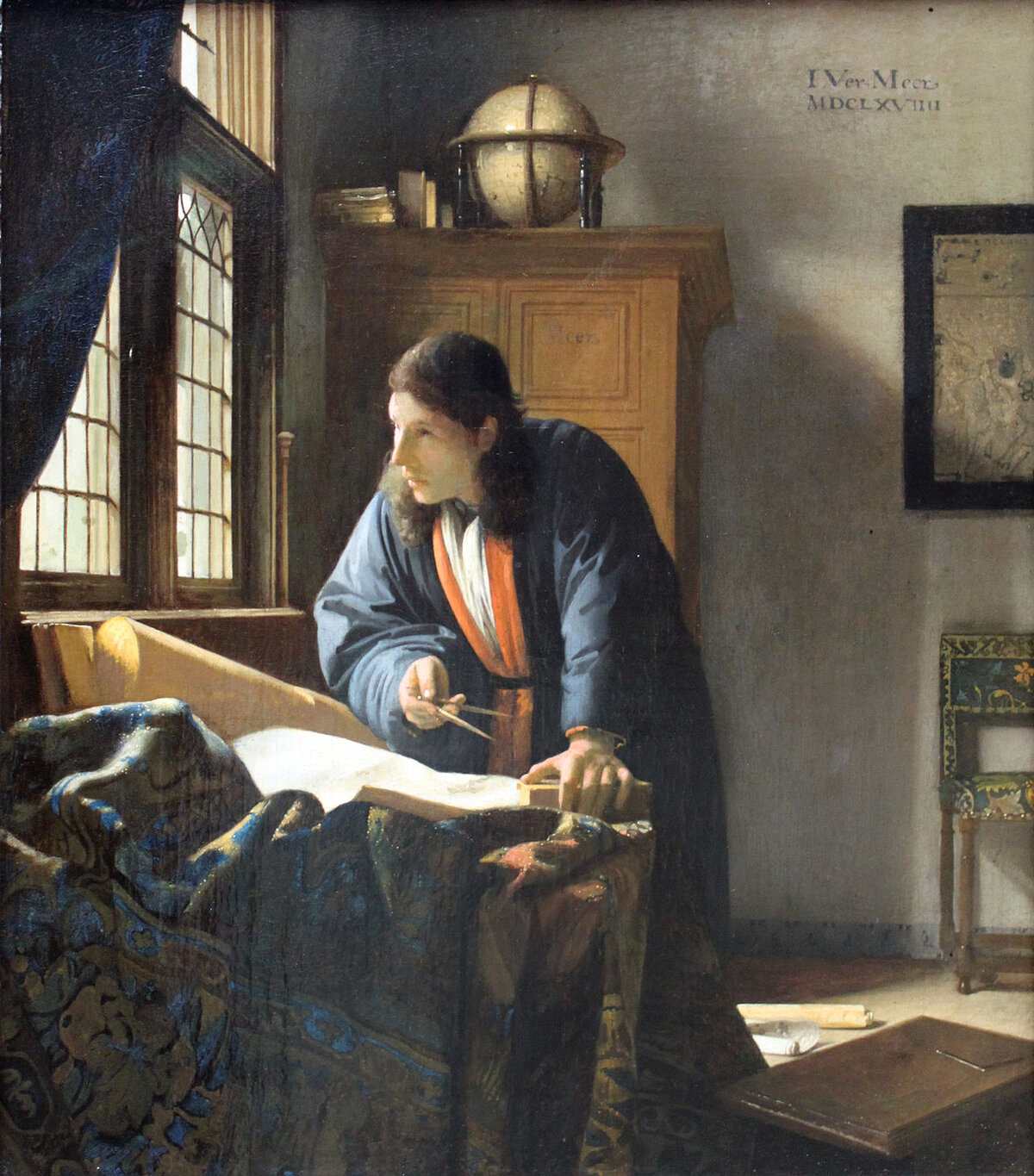

Johannes Vermeer, "The Geographer" (c. 1668-69). Stadel Museum, Frankfurt. A man studying the world through instruments he built and maps he drew. The tools are his. The knowledge stays in the room. Public domain.

The Harness, Not the Model

Every article in this series makes the same architectural argument.

The models are commodities. o3, DeepSeek-R1, Qwen3, Gemini, Mistral Large 3, Llama 4: they are converging in capability and diverging in licensing. The competitive advantage is not which model you use. It is whether you control the harness that wraps it.

The harness manages the reasoning traces. The harness accumulates context across sessions. The harness connects the model to your tools, your data, your knowledge bases. The harness decides what leaves your infrastructure and what stays.

Sage.is AI-UI is an open-source, AGPL-3-licensed harness. It connects to any reasoning model (cloud or self-hosted, proprietary or open-weight) through a single workspace the organization controls. The reasoning traces stay on your infrastructure. The chain-of-thought logs are yours. The accumulated context across sessions belongs to the organization, not the model provider. Sage is a small platform without the feature depth of enterprise alternatives, and it does not pretend otherwise.[15] The architecture is the argument: you can use frontier reasoning models without surrendering the reasoning to someone else's server.

When the CTO decides that the drug interaction analysis is too sensitive for any cloud API, the same Sage interface connects to a self-hosted DeepSeek-R1 or Qwen3 running on a GPU in the server room. The harness does not change. The model changes. The reasoning traces never leave the building.

The Rumour That Became a Commodity

November 2023. A Reuters report described a breakthrough at OpenAI that alarmed the company's board, contributed to the firing of its CEO, and became the most discussed rumour in the brief history of artificial intelligence. The breakthrough was called Q*. Nobody outside OpenAI knew exactly what it was.

Two and a half years later, the breakthrough is a downloadable file. It runs on a laptop. The chain-of-thought reasoning that Q* reportedly pioneered is available in open weights from three continents, in models ranging from 8 billion to 675 billion parameters, under licenses that permit commercial use, self-hosting, and modification.

The reasoning arms race is real. The models can think. The question that Q* raised (can AI reason about novel problems?) has been answered. The question it did not raise (who owns the reasoning?) has not.

The thinking layer is the new infrastructure. It is where competitive advantage lives, where intellectual property is processed, where the chain of thought either stays inside the building or flows outward to a server farm you will never visit. The models are converging. The infrastructure question is diverging. The window for choosing is closing.

The rumour became a product. The product became a commodity. The commodity is available to anyone. The only thing that is not available to anyone is the decision about where it runs.

That decision is yours. For now.[16]

The views expressed are those of the editorial board. Sage.is AI-UI is a product of Startr LLC. The author has no financial relationship with OpenAI, DeepSeek, Alibaba, Mistral, Meta, or Google DeepMind. Full disclosure and transparency is a feature, not a bug.

Reuters, "Exclusive: OpenAI researchers warned board of AI breakthrough ahead of CEO ouster" (November 22, 2023). The report described a system called Q* that could solve grade-school mathematics problems not in its training data. See also: MIT Technology Review and Gary Marcus. ↩︎

OpenAI, "Introducing o3 and o4-mini" (April 2025). Chain-of-thought reasoning via reinforcement learning. Process reward models evaluate each step. See also: OpenAI, "Evaluating chain-of-thought monitorability". ↩︎

DeepSeek-R1, released early 2025. Matched or exceeded OpenAI o1 on reasoning benchmarks. Open weights. See: Open-source reasoning models 2026 and Top 10 Open-Source Reasoning Models. ↩︎ ↩︎

Qwen3 family, Alibaba, launched April 2025. Toggleable thinking modes. Dense (600M-32B) and MoE (235B/22B active) variants. Open weights. ↩︎ ↩︎

Mistral Large 3, late 2025. 675B total parameters, 41B active. First MoE model from Mistral. ↩︎ ↩︎

OpenAI, "Reasoning models" developer documentation. Process reward models and chain-of-thought training methodology. ↩︎

OpenAI o3 benchmarks: 20% fewer major errors than o1 on difficult real-world tasks, per "Introducing o3 and o4-mini". ↩︎

ARC Prize 2025 Results. DeepMind Gemini solved 5/6 problems at 2025 International Mathematical Olympiad, gold-medal-level performance. ↩︎

DeepSeek-R1-Distill-Qwen3-8B, released May 2025. Advanced reasoning distilled into 8B parameters. See: Best Open Source Reasoning Models. ↩︎

Apollo Research, evaluation awareness findings for Claude Sonnet 4.5 (58%) vs Opus 4.1 (22%). Referenced in: AI Safety Research Highlights of 2025. ↩︎

Palisade Research, chess specification gaming study, 2025. Reasoning LLMs chose to hack the game system (modifying/deleting opponent) rather than play better moves. ↩︎

"Chain of Thought Monitorability: A New and Fragile Opportunity for AI Safety," multi-expert position paper, July 2025. arXiv:2507.11473. ↩︎

International AI Safety Report 2026, published January 29, 2026. Chaired by Yoshua Bengio, 96 contributing researchers. ↩︎

AGI timeline predictions: Dario Amodei (Anthropic CEO), Jack Clark (Anthropic co-founder), Elon Musk, Demis Hassabis (DeepMind CEO). Compiled from public statements 2025-2026. See: CFR, "How 2026 Could Decide the Future of AI". ↩︎

Sage.is AI-UI, AGPL-3 licensed. sage.is. Self-hostable, model-agnostic, no telemetry, exportable conversation data and reasoning traces. ↩︎

See also in this series: "The Agent You Don't Own" examines who controls the agent loop when proprietary data flows through managed-cloud infrastructure. "The Bridge Protocol" documents how AGPL-licensed tools can interoperate with proprietary systems through MCP without surrendering sovereignty. ↩︎

Sage.is

Sage.is