On a Tuesday morning in October 2024, a research team at Simular AI in San Francisco pointed an agent at a Linux desktop and told it to do something mundane: open LibreOffice Calc, import a CSV file, filter for rows where revenue exceeded a threshold, create a chart, and export the result as a PDF. Five clicks, two keyboard shortcuts, a drag-select, a menu dive, and a save dialog. The kind of task an administrative assistant does without thinking and a machine had never reliably done at all.

The agent completed the task. It did not call an API or run a script. Instead, it looked at the screen, identified the buttons, moved the cursor, clicked, typed, waited for the interface to respond, and clicked again. It used the computer the way a person does, if that person had never seen a computer before and was working from a combination of written instructions and a vague memory of having done something similar once. It succeeded about 20 percent of the time.

Fourteen months later, the same team's successor system hit 72.6 percent on OSWorld, a benchmark of real desktop tasks across Ubuntu, Windows, and macOS. The human baseline on that benchmark is 72.36 percent.

The agent passed the human.

Ang Li, Simular's CEO, described it as crossing a historic threshold: "It wasn't clear whether AI could reliably use a computer the way humans do."[1] The number appeared in a December 16, 2025 announcement alongside a partnership with Microsoft to pilot the technology on Windows 365 for Agents.[2] Simular, founded in 2023, had raised $3.5 million in seed funding. The implication was unmistakable. A four-person research team in a seed-stage startup had built a system that matched human performance at operating a computer.

The numbers are real. What they mean is more complicated than anyone selling agent software would like to admit.

The Architecture of a Click

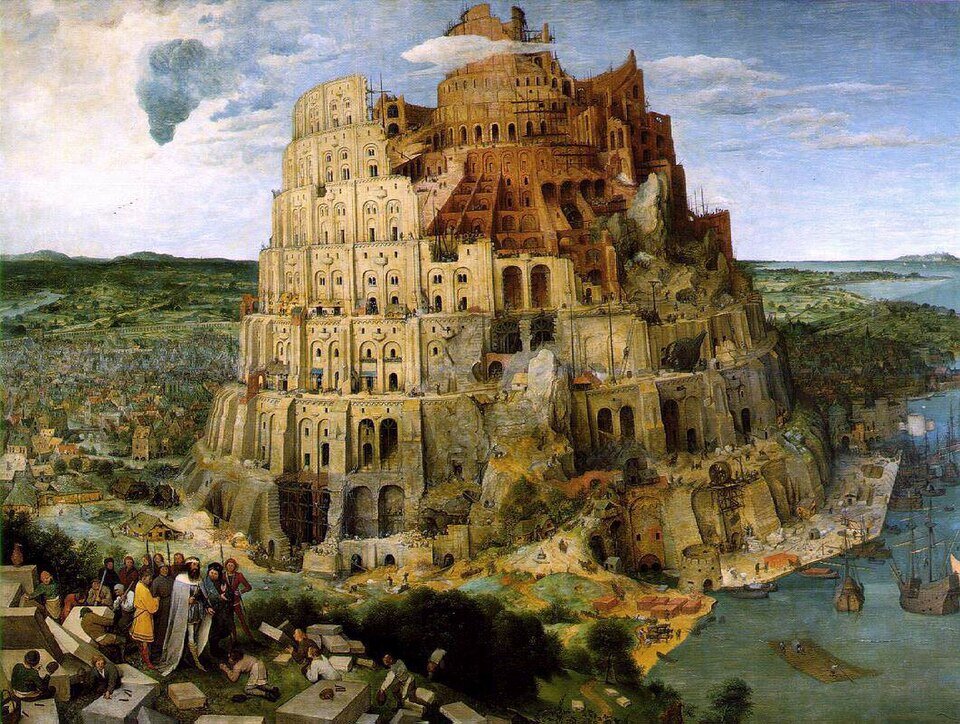

Pieter Bruegel the Elder, "The Tower of Babel" (1563). Kunsthistorisches Museum, Vienna. An impossible structure rising through parallel effort on every level. Public domain

The Agent S paper, published on October 10, 2024 by Saaket Agashe, a PhD researcher at Ohio State University, along with colleagues Jiuzhou Han, Shuyu Gan, Jiachen Yang, and Ang Li, introduced three ideas that became the architectural spine of every serious computer-use agent that followed.[3]

The first was hierarchical planning. A Manager agent receives the task and decomposes it into ordered subtasks. A Worker agent executes each subtask, one action at a time. When the Worker finishes a subtask or fails, the Manager reassesses. This is not a single model trying to hold a 47-step procedure in context. Instead it is two layers of reasoning, with the Manager adjusting the plan as conditions change. The same principle that makes military command structures work (no general tells a sergeant where to place each footstep) applied to software.
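A minimal sketch of the two-layer loop described above, to make the division of labor concrete. The names `Manager`, `Worker`, `run_task`, `plan_fn`, and `act_fn` are hypothetical, and the two callables stand in for model calls; this is an illustration under those assumptions, not Simular's implementation. For brevity it replans from scratch, and only after a failure.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Subtask:
    description: str
    done: bool = False


@dataclass
class Manager:
    """Decomposes a task into ordered subtasks and replans after failures."""
    plan_fn: Callable[[str, List[str]], List[str]]  # hypothetical planning-model call
    failures: List[str] = field(default_factory=list)

    def plan(self, task: str) -> List[Subtask]:
        return [Subtask(d) for d in self.plan_fn(task, self.failures)]


@dataclass
class Worker:
    """Executes a single subtask and reports success or failure."""
    act_fn: Callable[[str], bool]  # hypothetical act-and-observe loop

    def execute(self, subtask: Subtask) -> bool:
        subtask.done = self.act_fn(subtask.description)
        return subtask.done


def run_task(task: str, manager: Manager, worker: Worker, max_replans: int = 3) -> bool:
    for _ in range(max_replans + 1):
        for subtask in manager.plan(task):
            if not worker.execute(subtask):
                manager.failures.append(subtask.description)
                break  # hand control back to the Manager to reassess
        else:
            return True  # every subtask in the current plan succeeded
    return False


def toy_plan(task: str, failures: List[str]) -> List[str]:
    # Fall back to the menu path once the keyboard shortcut has failed.
    shortcut = "export via shortcut"
    export = "export via File menu" if shortcut in failures else shortcut
    return ["open file", "apply filter", export]


def toy_act(description: str) -> bool:
    return description != "export via shortcut"  # pretend the shortcut is broken


manager = Manager(plan_fn=toy_plan)
ok = run_task("export filtered CSV as PDF", manager, Worker(act_fn=toy_act))
```

In the toy run, the Worker fails on the shortcut, the Manager records the failure, and the second plan routes around it. The Worker never sees the whole task, only the subtask in front of it.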

The second was dual memory. The system maintains what the researchers call narrative memory (high-level summaries of past tasks: "I have done spreadsheet exports before; the save dialog had a dropdown for format selection; it's best to use plain text") and episodic memory (specific action sequences that worked: "In LibreOffice, File, then Export as PDF, then confirm"). Both are retrieved at inference time and injected into the prompt. The agent "learns" from experience without retraining. It remembers what worked.
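The retrieve-and-inject step can be sketched in a few lines. Everything here is an assumption made for illustration: the `DualMemory` class, the prompt layout, and the word-overlap scoring, which stands in for whatever similarity search a real system would use.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


def _overlap(a: str, b: str) -> int:
    """Crude relevance score: number of shared lowercase words."""
    return len(set(a.lower().split()) & set(b.lower().split()))


@dataclass
class DualMemory:
    narrative: List[Tuple[str, str]] = field(default_factory=list)  # (task, summary)
    episodic: List[Tuple[str, str]] = field(default_factory=list)   # (subtask, action sequence)

    def remember_task(self, task: str, summary: str) -> None:
        self.narrative.append((task, summary))

    def remember_subtask(self, subtask: str, actions: str) -> None:
        self.episodic.append((subtask, actions))

    @staticmethod
    def _best(store: List[Tuple[str, str]], query: str) -> str:
        """Return the stored value whose key best matches the query, or ''."""
        scored = [(_overlap(key, query), value) for key, value in store]
        score, value = max(scored, default=(0, ""))
        return value if score > 0 else ""

    def build_prompt(self, task: str, subtask: str) -> str:
        """Inject retrieved memories into the prompt; no retraining involved."""
        parts = [f"Task: {task}", f"Current subtask: {subtask}"]
        summary = self._best(self.narrative, task)
        if summary:
            parts.append(f"Past experience: {summary}")
        actions = self._best(self.episodic, subtask)
        if actions:
            parts.append(f"Known working steps: {actions}")
        return "\n".join(parts)


memory = DualMemory()
memory.remember_task("export spreadsheet as PDF",
                     "The save dialog had a dropdown for format selection.")
memory.remember_subtask("export as PDF in LibreOffice",
                        "File > Export as PDF > confirm")
prompt = memory.build_prompt("export revenue spreadsheet as PDF", "export as PDF")
```

The model's weights never change. What changes is the text it is handed before it acts.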

The third was a proper interface between the agent and the computer. Instead of relying solely on screenshots (which are ambiguous, because a button that looks like a link might be a dropdown) or solely on the accessibility tree (which is brittle, because not every application exposes one), Agent S uses both. The screenshot tells it what the screen looks like. The accessibility tree tells it what each element is and how to interact with it. Visual understanding for context. Structured data for precision.
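One way to picture the combination: resolve the target through the accessibility tree when it is there, and fall back to a screenshot-derived guess when it is not. The `A11yNode` structure, the `ground_action` function, and the fallback order are assumptions made for this sketch; the paper's actual interface is not reproduced here.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[int, int]


@dataclass(frozen=True)
class A11yNode:
    role: str                           # e.g. "button", "combo box"
    name: str                           # accessible label
    bounds: Tuple[int, int, int, int]   # x, y, width, height in screen pixels


def find_target(nodes: List[A11yNode], role: str, name: str) -> Optional[A11yNode]:
    """Look up an element by role and label (case-insensitive on the label)."""
    for node in nodes:
        if node.role == role and node.name.lower() == name.lower():
            return node
    return None


def click_point(node: A11yNode) -> Point:
    """Center of the element's bounding box."""
    x, y, w, h = node.bounds
    return (x + w // 2, y + h // 2)


def ground_action(nodes: List[A11yNode], role: str, name: str,
                  visual_guess: Optional[Point] = None) -> Optional[Point]:
    """Prefer the structured element; otherwise use the screenshot-derived guess."""
    node = find_target(nodes, role, name)
    if node is not None:
        return click_point(node)
    return visual_guess  # None if neither source can locate the target


tree = [
    A11yNode("button", "Export", (100, 200, 80, 30)),
    A11yNode("combo box", "File type", (100, 260, 200, 30)),
]
from_tree = ground_action(tree, "button", "export")
from_pixels = ground_action(tree, "button", "Print", visual_guess=(512, 40))
```

The tree also settles the ambiguity mentioned above: the "File type" element declares itself a combo box, whatever it happens to look like on screen.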

None of these ideas are individually original. Hierarchical task decomposition is from the 1970s. Memory-augmented agents have been explored for years. Multimodal perception is table stakes. What the paper demonstrated is that combining them in a coherent architecture, pointed at a real operating system with real applications where the file dialog sometimes takes two seconds to render and the button you need is behind a dropdown you did not expect, produces an agent that actually works.

Not in simulation, but on a live desktop.

The Scaling Trick Nobody Expected

The jump from 20 percent to 72.6 percent did not come from a better model. It came from a better strategy for using many models at once.

The technique is called Behavior Best-of-N. Instead of running one agent on a task and hoping it succeeds, you run N agents on the same task in parallel. Each agent takes its own path (different clicks, different orderings, different recovery strategies when something goes wrong). When all N runs finish, a judge model reads a structured summary of what each agent did and picks the best outcome.

That is the entire technique. No ensembling. No voting. No gradient averaging. Let several agents try. Watch what they do. Pick the winner.
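The control flow is short enough to sketch. The `Rollout` type, the `run_agent` and `judge` callables, and the toy stand-ins below are hypothetical; a real judge would be a model reading structured summaries rather than a string match.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rollout:
    agent_id: int
    summary: str  # structured description of what this agent did


def behavior_best_of_n(task: str,
                       run_agent: Callable[[str, int], Rollout],
                       judge: Callable[[str, List[str]], int],
                       n: int = 4) -> Rollout:
    """Run n independent attempts in parallel, then let a judge pick one."""
    with ThreadPoolExecutor(max_workers=n) as pool:
        rollouts = list(pool.map(lambda i: run_agent(task, i), range(n)))
    winner = judge(task, [r.summary for r in rollouts])  # index of the chosen run
    return rollouts[winner]


def toy_agent(task: str, seed: int) -> Rollout:
    # Pretend only one of the attempts gets through the save dialog.
    outcome = "PDF saved to ~/report.pdf" if seed == 2 else "stopped at save dialog"
    return Rollout(agent_id=seed, summary=f"agent {seed}: {outcome}")


def toy_judge(task: str, summaries: List[str]) -> int:
    # Stand-in for a judge model: prefer a run that reports a saved PDF.
    for index, summary in enumerate(summaries):
        if "PDF saved" in summary:
            return index
    return 0


best = behavior_best_of_n("export chart as PDF", toy_agent, toy_judge, n=4)
```

Note what the judge sees: summaries of behavior, not the agents' internal reasoning. The selection is only as good as that judge.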

The results are difficult to dismiss. Agent S3, Simular's third-generation system, scores 62.6 percent on OSWorld with a single run.[4] Add Behavior Best-of-N and the score rises to 72.6 percent. The final ten-point jump (the difference between "promising research" and "matches humans") came entirely from running multiple agents and selecting among them. No architectural change. No new training data. Just more attempts and a good judge.

The machine does not need to be right on the first try. It needs to be right on one of the tries, and it needs a way to know which one.

The pattern is not new. It is the same insight behind DeepMind's AlphaCode (generate millions of programs, filter for the ones that pass tests), behind best-of-N sampling in reinforcement learning, and behind the brute-force iteration argument that diffusion language models are beginning to make real. The principle keeps surfacing because it works: when verification is cheaper than generation, generate more and verify harder.

The compute economics are counterintuitive. Ten parallel agent runs cost ten times as much as one. At current API pricing, a single complex desktop task might cost $0.50 to $2.00 in model inference. Ten parallel runs cost $5.00 to $20.00. Measured in inference alone, the cost per successful task goes up. But if the success rate jumps from 62.6 percent to 72.6 percent, the total cost per completed task can still drop, because you pay less often for what failure costs: retries, human fallback, and the $40 to $80 per hour that a human operator costs to do the same work. The scaling is not free. When failures are expensive, it is often cheaper than the alternative.
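A back-of-the-envelope model makes the trade-off explicit. It assumes ten parallel runs, a flat inference cost per run, and a single fallback cost charged whenever the agent fails; the function names and the $120 fallback figure are illustrative assumptions, not numbers from any cited source.

```python
def expected_cost(run_cost: float, n_runs: int,
                  success_rate: float, fallback_cost: float) -> float:
    """Expected total cost per task: inference plus fallback when the agent fails."""
    return n_runs * run_cost + (1.0 - success_rate) * fallback_cost


def break_even_fallback(run_cost: float, n_runs: int,
                        single_rate: float, bon_rate: float) -> float:
    """Fallback cost above which n parallel runs beat a single run."""
    return (n_runs - 1) * run_cost / (bon_rate - single_rate)


# Success rates from the article; $1.00 per run sits inside its $0.50 to $2.00 range.
single = expected_cost(run_cost=1.00, n_runs=1, success_rate=0.626, fallback_cost=120.0)
parallel = expected_cost(run_cost=1.00, n_runs=10, success_rate=0.726, fallback_cost=120.0)
threshold = break_even_fallback(run_cost=1.00, n_runs=10,
                                single_rate=0.626, bon_rate=0.726)
```

Under these assumptions the break-even point is roughly $90 per failed task at $1.00 per run. Below that, the single run is cheaper. Above it, the extra attempts pay for themselves. The threshold scales linearly with the per-run cost, so at $0.50 per run it falls to about $45.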

The Mirror and the Inbox

Jan van Eyck, "The Arnolfini Portrait" (1434). National Gallery, London. The convex mirror in the background shows the scene from a different angle. Benchmarks and production are two views of the same room. Public domain

Here is where the honest analysis begins.

OSWorld is a well-designed benchmark. The tasks are real (install software, configure system settings, process files, navigate applications). The desktop is real (a virtual machine running actual operating systems) and the evaluation is functional (did the file get created with the right content? does the spreadsheet contain the correct values?). It is the best benchmark available for measuring whether an agent can use a computer.

It is also a benchmark. And benchmarks have three properties that production desktops do not.

The tasks are bounded. OSWorld tasks have clear start states, clear end states, and defined scope. A real user's instruction ("clean up my email and reschedule the conflicting meetings") has ambiguous boundaries, undefined success criteria, and requires judgment calls the benchmark never tests. The benchmark knows what "done" looks like. The inbox never does.

The environment is controlled. The virtual machine starts fresh. No unexpected popups. No software updates mid-task. No second monitor. No VPN reconnection dialog. No colleague's Slack message stealing focus. No "your session has expired, please log in again." Production desktops are hostile environments, and every unexpected dialog is a fork in a decision tree the agent has never encountered.

Failure is consequence-free. When an agent fails on OSWorld, nothing happens. The VM resets. The next task loads. When an agent fails on a production desktop (clicks the wrong button, sends the wrong email, deletes the wrong file, submits the wrong form, confirms the wrong purchase), the consequences are real and sometimes irreversible.

Only 8.6 percent of companies report having AI agents deployed in production.[5] Arun Chandrasekaran, a distinguished vice president analyst at Gartner in Stamford, Connecticut, published a forecast in late 2025 predicting that by the end of 2027, more than 40 percent of agentic AI projects will fail or be canceled due to escalating costs, unclear business value, or insufficient risk controls.[6] Forty-six percent of enterprise respondents in Deloitte's 2026 State of AI survey cite integration with existing systems as their primary adoption challenge.[7] These are not the numbers of a technology that has arrived. They are the numbers of a technology that works in the lab and struggles in the building.

The gap is not surprising. It is the normal trajectory of every technology that crosses a benchmark threshold before crossing a deployment threshold. Speech recognition "matched humans" on specific datasets years before it could handle a phone call with background noise. Self-driving cars "matched humans" on highway benchmarks years before they could navigate a construction zone. The benchmark is the beginning of the hard work, not the end.

The Safety Surface Nobody Has Mapped

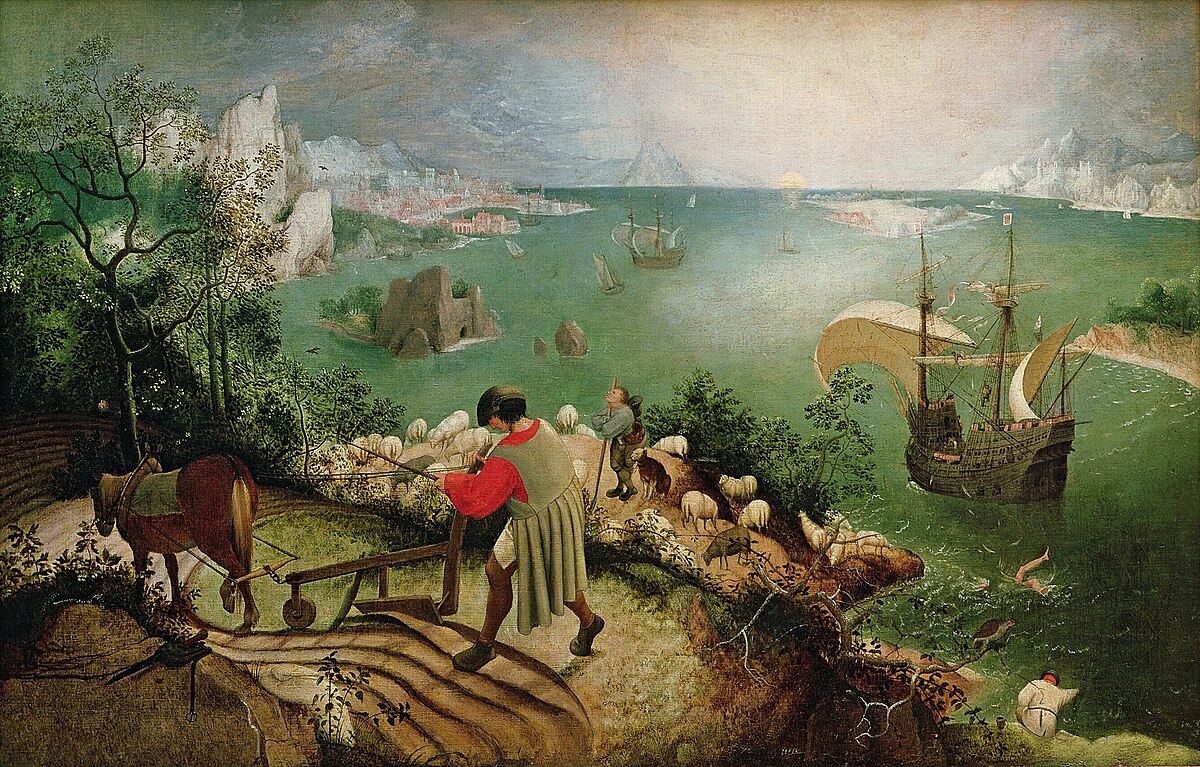

Pieter Bruegel the Elder, "Landscape with the Fall of Icarus" (c. 1560). Royal Museums of Fine Arts of Belgium. A boy falls from the sky into the sea. The farmer keeps plowing. The ship sails on. Public domain

Coding agents operate in sandboxes. They write code, run tests, and the blast radius of a mistake is a failed build. Computer-use agents operate on live desktops. They have the mouse and keyboard. The blast radius of a mistake is whatever the mouse and keyboard can reach.

The International AI Safety Report 2026, published on January 29 by a committee of 96 researchers chaired by Yoshua Bengio of the University of Montreal, identifies a specific class of risk that computer-use agents embody: systems that "act autonomously, making it harder for humans to intervene before failures cause harm."[8] Current mitigation techniques, the report notes, "can reduce failure rates but not to the level required in many high-stakes settings." Prompt injection attacks remain effective against major models with relatively few attempts.

The OWASP Top 10 for Agentic Applications, released on January 16, 2026, names the new attack surface plainly: the industry is "no longer securing what AI says, but what AI does."[9] When an agent has system-level privileges to execute real-world actions and manage persistent memory, the report concludes, "traditional application security principles prove inadequate."

Consider what a computer-use agent requires to be useful. Mouse and keyboard control: it can navigate any application, and it can also click "Send," "Delete," or "Confirm Purchase." Screen reading: it can understand application state, and it can also read passwords, private messages, and financial data visible on screen. File system access: it can open and save documents, and it can also read, modify, or delete anything the user can access. Network access: it can use web applications, and it can also submit forms, send emails, and make API calls as the user.

Every permission granted to make the agent effective is a permission that can be exploited, misused, or simply exercised incorrectly by a system that is right 72.6 percent of the time and wrong the rest.

OpenAI's Operator addresses this with a "takeover mode" in which the agent pauses and asks for manual handling of sensitive inputs (passwords, payment details, confirmations). Anthropic's computer-use implementation constrains actions to safe primitives. Agent S uses the accessibility tree to limit interactions to known interface elements rather than arbitrary pixel coordinates.

These are guardrails, not solutions. The fundamental tension is that a computer-use agent becomes more useful as it gets more access and more dangerous for exactly the same reason. A 27.4 percent failure rate on a benchmark is a research result. A 27.4 percent failure rate on a live desktop is a catastrophe.

The Verification Problem

Anyone building agent systems has already discovered this, but computer-use agents make it visceral: the bottleneck is not generation but verification.

A coding agent can verify its work by running the test suite. A computer-use agent has no equivalent. There is no test for "did the email go to the right person." There is no assertion for whether the attachment was correct. The form submission may have succeeded or silently failed because a required field was left empty behind a scroll, and the agent has no way to know. Post-hoc verification (checking after the action) means the email has already been sent, the file has already been deleted, the form has already been submitted. For irreversible actions, post-hoc is too late.

What the architecture needs is pre-action verification: before the agent clicks "Send," something checks whether the email is addressed correctly, whether the content matches the user's intent, whether the action is reversible. This is the "takeover mode" idea generalized into an architectural principle. Every action classified by consequence and reversibility before execution. Safe actions proceed. Risky actions pause for confirmation. Dangerous actions block entirely.
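As a sketch of the principle, a gate can sit between the agent's decision and the operating system. The `Action` fields, the `gate` function, and the three-way policy are assumptions made for illustration. The hard part, deciding reliably whether a given click is reversible or high-stakes, is taken as given here.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    CONFIRM = "pause for confirmation"
    BLOCK = "block"


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool    # can the effect be undone after the fact?
    high_stakes: bool   # money, credentials, bulk deletion, and the like


def gate(action: Action) -> Decision:
    """Classify an action before it executes, never after."""
    if action.reversible and not action.high_stakes:
        return Decision.PROCEED   # safe: let the agent continue
    if not action.reversible and action.high_stakes:
        return Decision.BLOCK     # dangerous: refuse outright
    return Decision.CONFIRM       # risky: hand the decision to a human


decisions = {
    action.name: gate(action)
    for action in [
        Action("open File menu", reversible=True, high_stakes=False),
        Action("send email", reversible=False, high_stakes=False),
        Action("confirm purchase", reversible=False, high_stakes=True),
    ]
}
```

The policy itself is three lines. The classification it depends on is the open problem.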

No current system fully implements this. The ones that come closest treat it as a safety layer bolted onto the agent. The argument this research makes, implicitly, is that verification is not a layer. It is the product. Whoever solves pre-action verification for desktop agents will own the deployment story. Everyone else will own the benchmark story.

The machine has learned to click. It has not learned to ask whether it should.

The Harness, Not the Model

This is where the architectural insight connects to something practical.

The models underneath these agents are commodities. Agent S runs on GPT-4o. Agent S3 runs on GPT-5. The same models power every other agent framework, every chatbot, every coding assistant. If the model is the commodity, the competitive advantage lies in what wraps it: the harness that decomposes tasks, manages memory, routes actions through verification, and connects the model to the systems it needs to operate.

Isabelle Plante, co-founder and CEO of Startr LLC, the eighteen-year-old, zero-venture-capital company behind the Sage.is AI-UI platform, has been making this argument since before the agent era began: "The harness is the product, not the model." Sage.is AI-UI was built on this premise. It is an open-source, AGPL-3-licensed platform that connects to whatever models an organization chooses (Claude, GPT, Gemini, DeepSeek, Llama, Mistral, locally hosted models), through a single workspace the organization controls. The platform does not send data to a training pipeline. It does not retain conversations for model improvement. It does not grant a third party rights to intellectual property.

For computer-use agents, this architecture is not optional. It is a prerequisite. An agent that operates on a live desktop has access to everything on that desktop: emails, files, credentials, financial data, proprietary documents. The question of where that agent's observations go (to the user's infrastructure, or to a cloud provider's servers) is not a privacy nicety. It is the difference between a useful tool and a surveillance liability.

The dual memory system that Agent S pioneered (narrative memory for high-level patterns, episodic memory for specific procedures) is the computer-use equivalent of the data moat. An agent that remembers how your specific desktop is configured, which applications you prefer, and which workflows you use will outperform a generic agent on your tasks, even if the generic agent scores higher on OSWorld. That memory is competitive advantage. It should not leave the building.

Sage's architecture (self-hosted or managed, multi-model, data-sovereign) is designed for exactly this scenario. Knowledge bases powered by retrieval-augmented generation let teams build domain-specific agent behavior without sending proprietary information outside the organization's perimeter. The harness owns the memory. The organization owns the harness.

Where the Click Lands

Raphael, "The School of Athens" (1509-1511). Apostolic Palace, Vatican City. Every thinker in one room, each with a different method. Public domain

The trajectory from 20 percent to 72.6 percent in fourteen months is extraordinary. The architectural ideas (hierarchical planning, memory-augmented learning, multimodal perception, best-of-N scaling) are sound and composable. They will improve. The models underneath them will improve. The benchmarks will get harder and the agents will keep climbing.

But the deployment gap is real, and it is not primarily a capability gap. It is a trust gap. Coding agents earned trust because their blast radius is contained (a failed test, a broken build, a reverted commit). Computer-use agents need to earn trust on a desktop where the blast radius is the user's entire digital life.

The path forward is constrained deployment first: agents that operate within a single application (just the browser, just the spreadsheet, just the email client) rather than the full desktop. Smaller blast radius, easier verification, faster trust-building. Then pre-action verification as a first-class architectural primitive, not a bolt-on safety feature. Then memory as competitive advantage, accumulated on infrastructure the organization controls.

On a Tuesday morning in October 2024, an agent at Simular AI looked at a Linux desktop and tried to export a spreadsheet as a PDF. It failed four times out of five. Fourteen months later, a descendant of that agent matches human performance on a benchmark designed to measure exactly this capability. Fourteen months after that, the question will not be whether the machine can click. It will be whether anyone has built the verification, safety, and trust infrastructure to let it click on something that matters.

That infrastructure (not the agent, not the model, not the benchmark score) is the product. And it does not exist yet.

The views expressed are those of our editorial board and do not necessarily reflect the positions of any institution mentioned. Full disclosure and transparency is a feature, not a bug.

[1] Ang Li, CEO of Simular AI. Quoted in Simular's December 16, 2025 announcement of Agent S3 benchmark results.

[2] Simular AI and Microsoft partnership announcement for Windows 365 for Agents pilot, December 2025.

[3] Saaket Agashe, Jiuzhou Han, Shuyu Gan, Jiachen Yang, Ang Li. "Agent S: An Open Agentic Framework that Uses Computers Like a Human." arXiv, October 10, 2024.

[4] Agent S3 benchmark results: 62.6% single-run, 72.6% with Behavior Best-of-N on OSWorld. Human baseline: 72.36%. Simular AI, December 2025.

[5] Enterprise AI agent deployment data, 2025. Various industry surveys including Gartner and McKinsey.

[6] Arun Chandrasekaran, Distinguished VP Analyst, Gartner. Forecast published late 2025: 40%+ of agentic AI projects to fail or be canceled by end of 2027.

[7] Deloitte, "State of AI in the Enterprise" (2026 edition). 46% of respondents cite systems integration as primary adoption challenge.

[8] International AI Safety Report 2026, published January 29, 2026. Chaired by Yoshua Bengio, University of Montreal. 96 contributing researchers.

[9] OWASP, "Top 10 Risks for Agentic Applications," released January 16, 2026. owasp.org.

Sage.is
