The Agent You Don't Own

On a Thursday morning in late February, a DevOps engineer named Daniel Reeves sat in a windowless office in Portland and watched an AI agent refactor his company's authentication microservice. The agent read the codebase, identified a race condition in the session handler, wrote a patch, ran the test suite, caught a failing integration test, revised the patch, and pushed a clean commit to the staging branch. The entire cycle took four minutes and eleven seconds. Daniel reviewed the diff, approved the merge, and moved on to his next ticket.

He did not write a single line of code. He did not need to. The agent (running GPT-5.4 inside an OpenClaw-style loop) had planned the work, executed it, inspected the results, and corrected its own mistakes. Daniel's role was to say yes.

Two floors up, his CTO was on a call with the company's legal counsel, trying to understand what had just happened. Not the technical part. The part where their proprietary authentication logic, their session management architecture, and the specific race condition that had cost them a $200,000 production outage last November had all been transmitted to OpenAI's API, processed on infrastructure the company did not control, under terms of service the CTO had not read since the account was created.

The agent worked. The code shipped. The vulnerability that only Daniel's team knew about now existed, in some form, on a server farm in Iowa.

Daniel Reeves is not careless. He is the future of software engineering. And the infrastructure he depends on is designed to make sure he never owns the thing that does his thinking.

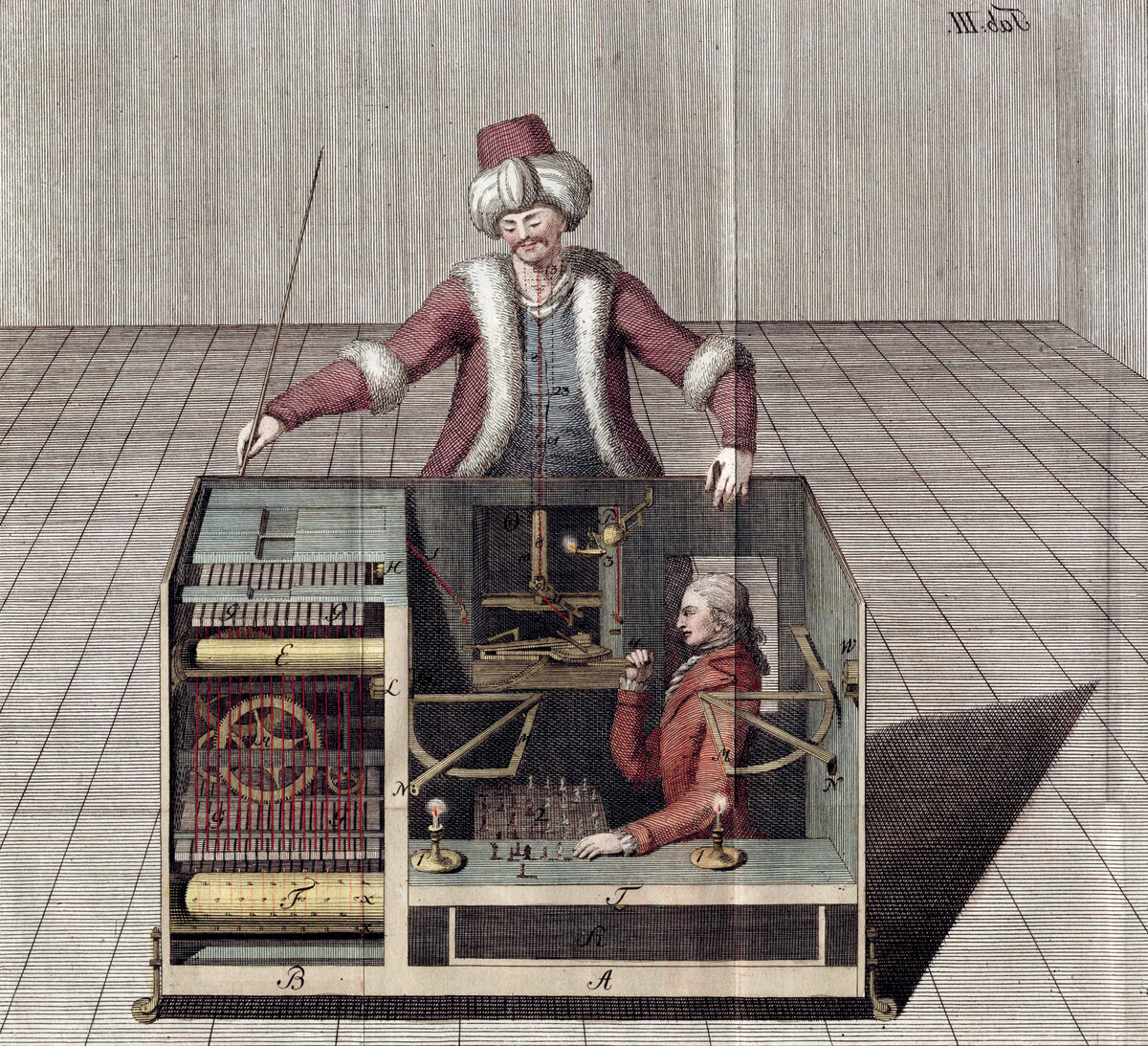

Joseph Racknitz, "The Turk" (1789). A diagram revealing the hidden operator inside Kempelen's famous chess-playing automaton. The machine that appeared autonomous was controlled by a person concealed inside. Public domain, via Wikimedia Commons.

The Benchmark That Misses the Point

Andrej Karpathy. Photo: Gladwin Analytics. CC BY 3.0, via Wikimedia Commons.

Andrej Karpathy, the former head of AI at Tesla and a founding member of OpenAI, noted in a February 2026 post that GPT-5.4 represents a step change in "agentic reliability" – the model's ability to follow multi-step instructions without losing the thread.[1] The numbers back him up. GPT-5.4 arrived in early 2026 with scores that made the agent-framework community pay attention. Seventy-five percent on the OSWorld computer-use benchmark, where the model operates a full desktop environment (browsing, clicking, typing, navigating file systems) like a remote employee who never sleeps.[2] Codex-level reliability on SWE-Bench, the industry standard for autonomous code patching.[3] A context window stretching to a million tokens, enough to hold an entire codebase and its commit history in working memory while the agent plans its next move.

The comparison to Anthropic's Claude models is instructive but narrow. Claude Sonnet 4.6 scores around 72.5 percent on similar computer-use tasks. Opus 4 reaches 72.7 percent.[4] The differences are real but marginal – a few percentage points on benchmarks that measure the ability to click the right button in a simulated operating system. On instruction-following, the models are converging. On coding reliability, they are within a rounding error. On cost, GPT-5.4 undercuts Claude for high-volume agent workloads by a meaningful margin.

The performance gap between frontier models is closing. The governance gap is widening.

The benchmarks measure what an agent can do. They do not measure who controls the loop, who stores the context, who owns the output, or what happens to the proprietary data that flows through the agent's million-token window on every run.

The entire conversation about agent frameworks (OpenClaw, Claude Code, Devin, Cursor, the proliferating ecosystem of plan-execute-inspect loops) is a conversation about capability. It has not yet become a conversation about sovereignty. That is about to change.

The Agent Loop, Explained to a Board

An AI agent is not a chatbot. A chatbot answers a question. An agent acts on instructions across multiple steps, using tools, inspecting results, and correcting course. The loop looks like this: the user provides a goal, the agent plans a sequence of actions, executes each action using tools (a code editor, a browser, a terminal, a database), inspects the output, decides whether to continue or revise, and repeats until the goal is met or the user intervenes.

OpenClaw, the open-source agent framework that has become a reference architecture for this pattern, chains these steps through a structured markdown protocol. Claude Code, Anthropic's first-party agent, does the same through a more tightly integrated interface. GPT-5.4's improvements in multi-step instruction following make it a strong fit for both. The model does not care which framework wraps it. It receives a prompt, produces an action, and waits for the next interaction.

The critical insight is not about which model runs the loop. It is about where the loop runs.

When Daniel's agent refactored the authentication service, the loop executed across four systems: his local machine, the OpenAI API, the Git remote, and the CI pipeline. The planning and reasoning happened on OpenAI's infrastructure. The proprietary context (the codebase, the architecture decisions, the specific vulnerability) was transmitted as API input. The output (the patch, the commit message, the reasoning trace) was generated on servers Daniel's company does not control.

Every agent run is a data pipeline. The pipeline flows outward.

Joseph Wright of Derby, "A Philosopher Lecturing on the Orrery" (c. 1766). Derby Museum and Art Gallery. A mechanical model of the solar system illuminates the faces of those gathered around it. Understanding the mechanism changes everything. Public domain, via Wikimedia Commons.

The Three Architectures

There are three ways to build an AI agent loop today. Each embeds a different answer to the question of who owns the thinking.

The Managed Cloud. The model runs on the provider's infrastructure. The agent framework calls the API. Every prompt, every response, every reasoning trace flows through the provider's servers. OpenAI, Anthropic, and Google all offer this model. It is the fastest to deploy, the easiest to scale, and the one that gives you the least control over your data. GPT-5.4 at 75 percent computer-use accuracy, Claude Opus at 72.7 percent, Gemini Pro at roughly the same – all of them available through a managed API, all of them processing your proprietary context on someone else's hardware.

This is what Daniel uses. It is what 90 percent of agent deployments use. This is the architecture that treats your competitive advantage as an API input.

Self-Hosted Models. Run the model yourself. Llama, Mistral, DeepSeek, Qwen – open-weight models that can be deployed on your own GPUs, in your own data center, under your own jurisdiction. The agent loop stays inside your perimeter. No API calls cross the boundary. The trade-off is capability: self-hosted models are powerful but they trail the frontier by months. You gain sovereignty. You lose the bleeding edge.

The Open Harness. This is the design that the benchmark conversation obscures. Instead of choosing between capability (cloud API) and control (self-hosted model), an open harness lets you connect to any model (cloud or local, open or proprietary) through a single interface you control. The harness handles the agent loop. The model provides the reasoning. The data stays in your environment because the harness does not phone home.

Sage.is AI-UI is built on this third architecture. It is an AGPL-3-licensed, self-hostable AI platform that connects to Claude, GPT-5.4, Gemini, Llama, Mistral, DeepSeek, or any OpenAI-compatible endpoint through a single workspace the organization owns. When the agent loop runs through Sage, the planning and reasoning still happen on the model provider's infrastructure (if you choose a cloud model), but the orchestration, the context management, the tool integrations, and the knowledge base all stay on yours.

The difference is not academic. When Daniel's company runs the same authentication refactoring through Sage connected to GPT-5.4's API, the model still processes the prompt – that is what an API does. But the agent loop's state, the accumulated context across runs, the proprietary knowledge base that informs the agent's decisions, and the reasoning traces that document what the agent did and why – all of that stays inside the company's perimeter. The Sage.is team does not retain conversations. They do not train on your data. They do not grant a third party rights to your intellectual property. The AGPL license means the code cannot be closed.

And when the CTO decides that the authentication service is too sensitive for any cloud API, the same Sage interface connects to custom self-hosted models running on a GPU in the server room.

The agent loop does not change. The model changes. The sovereignty stays.

The Cost of Not Choosing

James Grimmelmann. Photo: tvol. CC BY 2.0, via Flickr.

James Grimmelmann, a professor at Cornell Law School who studies digital property and AI governance, has argued that the legal frameworks for AI-generated output are still catastrophically unclear.[5] When an AI agent writes code, the ownership is genuinely unclear. The person who gave the instruction has a claim. So does the company that employs them, the model provider whose infrastructure produced the output, and the framework developer whose orchestration made it possible.

In the managed-cloud architecture, these questions are answered by terms of service – contracts drafted by the provider, accepted by clicking a button, and understood by almost nobody who uses the tool. OpenAI's current terms assert no ownership over outputs but reserve broad rights to inputs for "service improvement."[6] Anthropic's usage policy is more restrictive about training on business data but still routes all context through their infrastructure.[7] Google's Gemini terms vary by product tier in ways that require a lawyer to parse.

In the open-harness architecture, the questions are answered by who runs the infrastructure. If you own the harness and choose where the model runs, the output belongs to you by the same logic that makes a document you write on your own computer yours. The legal ambiguity does not disappear, but the factual control does not depend on someone else's terms of service.

The companies deploying agent frameworks today are making an architectural decision that will define their data sovereignty for years. Most of them do not know they are making it.

The European Signal

The European Union's AI Act entered full enforcement in 2025.[8] NIS2, the network and information security directive, imposes strict data-handling obligations on critical infrastructure providers.[9] Germany's Federal Office for Information Security (BSI) has published guidelines specifically addressing AI systems that process proprietary industrial data.[10] The direction is unambiguous: sovereign infrastructure for sovereign data.

GAIA-X, the European cloud sovereignty initiative, is building a framework for federated data infrastructure where organizations retain control over their data even when using shared computing resources.[11] Detlef Schmuck, the managing director of TeamDrive Systems, has argued that cloud-based AI tools represent a fundamental threat to European industrial competitiveness. "If the server operator can read the data, the data is not secure," Schmuck told a Hamburg technology conference in January 2026.[12] "And if the data is not secure, the competitive advantage it represents is already gone." TeamDrive has built its entire business on the principle that the server operator cannot access the content – a principle that becomes existentially important when the server operator is also training the next version of the AI model.

These are not hypothetical concerns. Bosch, Siemens, and BMW are building private AI infrastructure not because they distrust the technology but because they understand that the technology works too well. An agent that can refactor an authentication service can also memorize its architecture. A model that can optimize a machining sequence has, by definition, processed the trade secrets that define it. The question is not whether AI agents are capable enough to be useful. They crossed that threshold in 2025.

The question is whether the infrastructure that runs them is designed to protect or to extract.

What the Benchmarks Will Never Tell You

GPT-5.4 at 75 percent computer use. Claude Opus at 72.7 percent. Sonnet at 72.5 percent. The numbers will keep climbing. By the end of 2026, every frontier model will be capable of running sophisticated agent loops (planning, coding, browsing, deploying, patching) with reliability that approaches and eventually exceeds the median human developer on routine tasks.

The benchmark that matters is not on any leaderboard. It is the percentage of your proprietary context that leaves your infrastructure on every agent run. For Daniel's company, using the managed-cloud architecture, that number is 100 percent. Every prompt, every codebase snippet, every vulnerability, every architectural decision flows outward. For the same company using an open harness connected to the same model, the number depends on what they choose. Cloud API for routine work, self-hosted model for sensitive workloads, full context never leaving the building.

The agent race is real. GPT-5.4 is genuinely impressive. Claude Opus is genuinely impressive. The open-weight models are catching up faster than anyone expected. But the race everyone is watching (which model scores highest on which benchmark) is the wrong race.

The race that matters is the one between the organizations that own their agent infrastructure and the organizations that rent it. The models are converging. The infrastructure question is diverging. And the window for choosing the right architecture is closing faster than the window for choosing the right model.

The Loop Belongs to Someone

On a Thursday morning in Portland, Daniel Reeves watched an AI agent fix a race condition in four minutes. The code was clean. The tests passed. The merge was approved.

Somewhere in Iowa, on servers he will never visit, the architecture of his company's authentication system exists as a pattern in a model's context window. The vulnerability that cost $200,000 last November (the one that only his team knew about) has been described, analyzed, and solved by a system that serves every other company using the same API.

Daniel's agent is impressive. Daniel's agent is capable. Daniel's agent is not his.

The loop belongs to someone. The only question is whether it belongs to you.[13]

The views expressed are those of the editorial board and do not necessarily reflect the positions of any institution mentioned. Full disclosure and transparency is a feature, not a bug.

Andrej Karpathy, post on X/Twitter, February 2026, discussing GPT-5.4 agentic reliability improvements. ↩︎

OSWorld computer-use benchmark. GPT-5.4 score of 75% on full desktop environment operation tasks. ↩︎

SWE-Bench, the industry-standard benchmark for autonomous code patching. OpenAI technical report, 2026. ↩︎

Anthropic model card and benchmark disclosures. Claude Sonnet 4.6: 72.5%, Claude Opus 4: 72.7% on computer-use tasks. ↩︎

James Grimmelmann, Cornell Law School. Published work on digital property, AI governance, and copyright in AI-generated output. ↩︎

OpenAI Terms of Use, Section 3: "Content." Accessed March 2026. ↩︎

Anthropic Usage Policy and Commercial Terms of Service. Accessed March 2026. ↩︎

European Union Artificial Intelligence Act (Regulation 2024/1689), full enforcement 2025. ↩︎

NIS2 Directive (Directive 2022/2555) on network and information security for critical infrastructure. ↩︎

BSI (Bundesamt fur Sicherheit in der Informationstechnik), guidelines on AI systems processing proprietary industrial data, 2025. ↩︎

GAIA-X European Association for Data and Cloud, federated data infrastructure framework. gaia-x.eu. ↩︎

Detlef Schmuck, Managing Director, TeamDrive Systems GmbH. Remarks at Hamburg technology conference, January 2026. ↩︎

See also in this series: "The Thinking Layer" examines how open-weight reasoning models have made the sovereignty question urgent — the chain-of-thought is the new crown jewel. "The Bridge Protocol" documents how AGPL-licensed tools can interoperate with proprietary systems through MCP without surrendering sovereignty. "The Prompt You Thought Was Private" reveals how tracking scripts in AI tools transmit prompt content to third parties even when privacy settings are enabled. ↩︎

Sage.is

Sage.is